Once you have signed up for AddSearch, you have an account. The account is linked to a demo index that you can use to try out how the search works.

To use your own content in the search, you need to create a search index for your account. Creating the search index also creates its settings and statistics, and it lets you add users and subscribe to AddSearch’s plans.

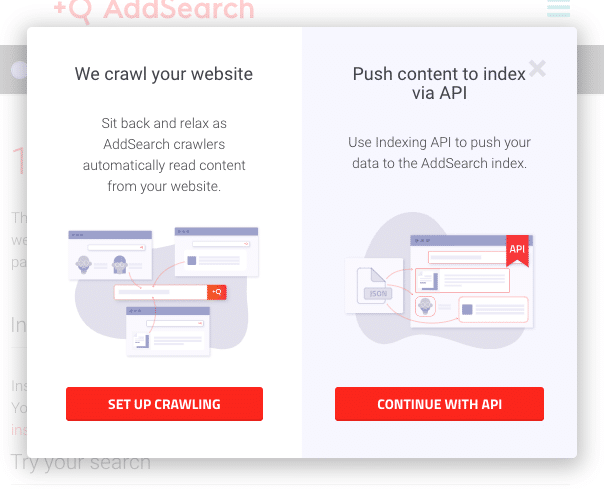

When you create a search index for your account, the AddSearch dashboard lets you choose between a crawling index and an API index. Which one should you choose?

If you want an index where we automatically collect the content from your website, the crawling index is for you.

If you have the technical expertise and want to push documents to the index with the indexing API endpoint, the API index is for you.

If you are interested in more information about the two types of indices, continue reading.

Crawling index

The crawling index requires you to add your website for crawling. Our crawler then follows the links on your website to find its web pages, collects the content of those pages, and stores it in the search index.
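To picture the link-following step, here is a minimal sketch using only Python's standard library. It is not AddSearch's crawler: it just collects the same-site links from one HTML page, which is how a crawler finds the next pages to visit. The names `LinkCollector` and `same_site_links` are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects absolute URLs from <a href="..."> tags on one HTML page."""

    def __init__(self, page_url: str) -> None:
        super().__init__()
        self.page_url = page_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page URL and drop any #fragment.
            absolute = urljoin(self.page_url, href).split("#", 1)[0]
            self.links.append(absolute)


def same_site_links(page_url: str, html: str) -> list[str]:
    """Return unique links from `html` that point to the same host as `page_url`."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    host = urlparse(page_url).netloc
    unique: list[str] = []
    for link in collector.links:
        # Skip links to other hosts and duplicates, keeping first-seen order.
        if urlparse(link).netloc == host and link not in unique:
            unique.append(link)
    return unique


if __name__ == "__main__":
    page = (
        '<a href="/pricing">Pricing</a> '
        '<a href="docs/#intro">Docs</a> '
        '<a href="https://other.example/">Elsewhere</a>'
    )
    print(same_site_links("https://www.example.com/", page))
```

Restricting the result to the starting host keeps the sketch on one website, which mirrors the idea of crawling the site you added rather than the whole web.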

In addition to following links on the web pages, AddSearch collects links from a sitemap named sitemap.xml located in the site root.
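As an illustration of what such a sitemap contains, the sketch below (standard-library Python, not AddSearch code) derives the sitemap.xml location from a site URL and reads the page URLs from its `<url><loc>` entries, following the public sitemaps.org format. The function names are hypothetical, and the sketch does not handle sitemap index files or compressed sitemaps.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

# XML namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_url(site_url: str) -> str:
    """Location of sitemap.xml in the site root, whatever page of the site is given."""
    return urljoin(site_url, "/sitemap.xml")


def urls_from_sitemap(xml_text: str) -> list[str]:
    """Return the page URLs listed in the <url><loc> entries of a sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


if __name__ == "__main__":
    sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url><loc>https://www.example.com/</loc></url>
      <url><loc>https://www.example.com/about</loc></url>
    </urlset>"""
    print(sitemap_url("https://www.example.com/blog/"))
    print(urls_from_sitemap(sample))
```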

The crawling index can also index dynamically generated content. This is referred to as Ajax crawling, and it is supported on the Professional, Premium, and Enterprise plans.

When you have chosen the crawling index option and added your website for indexing, your website is indexed automatically. You can check the indexing status on the Dashboard page of the AddSearch dashboard. For more information on the indexing statuses, please visit the documentation.

If we haven’t been able to index any pages from your website, visit here for troubleshooting or contact our support at [email protected].

API index

The API index requires you to push your content to the search index with the indexing API. As mentioned above, this requires technical expertise. For more information, please visit our reference page for the indexing API.
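To give a rough idea of what "pushing a document" involves, here is a sketch in standard-library Python that builds, but does not send, an HTTP request carrying one JSON document. Everything specific in it is an assumption, not the documented AddSearch API: the endpoint `API_BASE` is a placeholder, and the URL path, the HTTP Basic authentication with the index's public and secret keys, and the document fields (`id`, `custom_fields`) are all hypothetical. Take the real endpoint, document format, and authentication scheme from the indexing API reference.

```python
import base64
import json
import urllib.request

# HYPOTHETICAL placeholder: replace with the endpoint given in the indexing API reference.
API_BASE = "https://api.example.invalid/indices"


def build_index_request(public_key: str, secret_key: str, document: dict) -> urllib.request.Request:
    """Build (but do not send) a POST request that pushes one document.

    Assumes (hypothetically) a JSON body and HTTP Basic authentication with the
    index's public key as the user name and its secret key as the password.
    """
    credentials = base64.b64encode(f"{public_key}:{secret_key}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{API_BASE}/{public_key}/documents/",  # hypothetical path
        data=json.dumps(document).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {credentials}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical document shape; check the reference for the real field names.
    doc = {"id": "product-123", "custom_fields": {"title": "Blue running shoe", "price": 89}}
    req = build_index_request("PUBLIC_KEY", "SECRET_KEY", doc)
    print(req.get_method(), req.full_url)
    # urllib.request.urlopen(req) would send the request; it is left out so the sketch runs offline.
```

Keeping request construction separate from sending makes it easy to inspect the payload before pointing the code at a real index.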

Besides site search implementations, the API index lets you create search indices for other platforms, such as mobile or desktop applications.